C Programming Training Classes in Bismarck, North Dakota

Learn C Programming in Bismarck, North Dakota and surrounding areas via our hands-on, expert-led courses. All of our classes are offered on an onsite, online, or public instructor-led basis. Here is a list of our current C Programming related training offerings in Bismarck, North Dakota: C Programming Training

C Programming Training Catalog

Course Directory [training on all levels]

- .NET Classes

- Agile/Scrum Classes

- Ajax Classes

- Android and iPhone Programming Classes

- Blaze Advisor Classes

- C Programming Classes

- C# Programming Classes

- C++ Programming Classes

- Cisco Classes

- Cloud Classes

- CompTIA Classes

- Crystal Reports Classes

- Design Patterns Classes

- DevOps Classes

- Foundations of Web Design & Web Authoring Classes

- Git, Jira, Wicket, Gradle, Tableau Classes

- IBM Classes

- Java Programming Classes

- JBoss Administration Classes

- JUnit, TDD, CPTC, Web Penetration Classes

- Linux Unix Classes

- Machine Learning Classes

- Microsoft Classes

- Microsoft Development Classes

- Microsoft SQL Server Classes

- Microsoft Team Foundation Server Classes

- Microsoft Windows Server Classes

- Oracle, MySQL, Cassandra, Hadoop Database Classes

- Perl Programming Classes

- Python Programming Classes

- Ruby Programming Classes

- Security Classes

- SharePoint Classes

- SOA Classes

- Tcl, Awk, Bash, Shell Classes

- UML Classes

- VMWare Classes

- Web Development Classes

- Web Services Classes

- Weblogic Administration Classes

- XML Classes

- RED HAT ENTERPRISE LINUX AUTOMATION WITH ANSIBLE: 3 June, 2024 - 6 June, 2024

- OpenShift Fundamentals: 24 June, 2024 - 26 June, 2024

- Introduction to C++ for Absolute Beginners: 20 May, 2024 - 21 May, 2024

- Docker: 22 July, 2024 - 24 July, 2024

- ASP.NET Core MVC, Rev. 6.0: 19 August, 2024 - 20 August, 2024

See our complete public course listing

Blog Entries: publications that entertain, make you think, offer insight

Over time, companies are migrating from COBOL to C# solutions for reasons such as cumbersome deployment processes, a scarcity of trained developers, platform dependencies, and increasing maintenance fees. Whether a company wants to migrate reporting applications, operational infrastructure, or management support systems, shifting from COBOL to C# can be time-consuming, risky, expensive, and complicated. However, the following four techniques can help companies reduce the complexity and risk around their modernization efforts.

Not All COBOL to C# Solutions Are Equal

It can be daunting for a company to sift through the sophisticated services and tools on the market to boost its modernization efforts. Manual modernization often turns into an endless nightmare, while the automated market is saturated with solutions that generate code that is impossible to maintain and extend once the migration is over. However, your IT department can still work with tools and services that create code that is easier to manage if it wants to capitalize on technologies such as DevOps.

Narrow the Focus

Most legacy systems are incompatible with newer systems. For years now, companies have passed legacy systems to one another without documenting them properly or considering their functional relationships. However, a detailed analysis of databases and legacy systems can be useful in decision-making and risk mitigation in any modernization effort. It is fairly common for companies to uncover a lot of unused and dead code when they analyze their legacy inventory carefully. Those discoveries, however, can help reduce both the scope of a COBOL to C# modernization and the cost of implementing it. Research has revealed that legacy inventory analysis can result in a 40% reduction of modernization risk. Besides making the modernization effort less complex, trimming unused and dead code, and reducing cost, companies can gain a lot more from analyzing these systems.

Understand Thyself

For most companies, the legacy system is a tangle of intertwined code developed by former employees who left the organization long ago. Those developers were free to apply whatever standards they liked and left behind little documentation, which makes it extremely risky for a company to migrate from COBOL to a C# solution. In 2013, CIOs teamed up with other IT stakeholders in the U.S. insurance industry to conduct a study, which found that only 18% of COBOL to C# modernization projects are completed within the scheduled period. Further research revealed that a poor understanding of the legacy application was the primary reason projects could not end as expected.

Furthermore, relying on the presumed accuracy of the legacy system for planning, and poorly understanding how far company rules and policies reach within the legacy system, are among the risks associated with migrating from COBOL to C# solutions. How well an organization understands the source environment also affects its ability to plan and implement a modernization project successfully. However, accurate, in-depth knowledge of the source environment can help reduce the chances of cost overruns, since workers understand the internal operations involved in the migration project. That way, companies can understand how time and scope affect the effort required to implement a plan successfully.

Use of Sequential Files

Companies often use sequential files as an intermediary to save data when migrating from COBOL to a C# solution. Alternatively, sequential files can be used for report generation or communication with other programs. However, software mining doesn’t migrate these files to SQL tables; instead, it maintains them on file systems. Companies can use data generated on the COBOL system to continue to communicate with the rest of the system at no risk. Sequential files also facilitate a secure migration path to advanced standards such as MS Excel.

Modern systems offer companies a range of portfolio analysis options that allow them to narrow the scope of a legacy application migration. Organizations may also capitalize on these to shed light on migration rules hidden in the legacy environment. A COBOL to C# modernization solution uses an extensible and fully maintainable code base to develop a functionally equivalent target application. Migration from a COBOL solution to C# applications involves language translation, analysis of all artifacts required for modernization, system acceptance testing, and database and data transfer. Optionally, companies may also want improvements such as code improvements, SOA integration, cleanup, screen redesign, and cloud deployment.

Information Technology is one of the most dynamic industries with new technologies surfacing frequently. In such a scenario, it can get intimidating for information technology professionals at all levels to keep abreast of the latest technology innovations worth investing time and resources into.

It can therefore be daunting for entry-level and mid-level IT professionals to decide which technologies they should be developing skills in. However, the biggest challenge falls to senior information technology professionals responsible for driving the IT strategy in their organizations.

It is therefore important to keep abreast of the latest technology trends and get them from reputable sources. Here are some of the ways to keep on top of the latest trends in Information Technology.

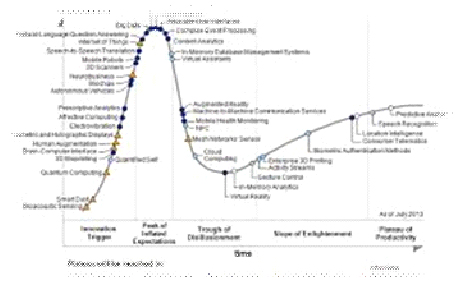

- Subscribe to leading analyst firms: If you work for a leading IT organization, chances are that you already have a subscription to leading IT analyst firms, notably Gartner and Forrester. These two are among the most recognized analyst firms, with extensive coverage of almost every enterprise technology, including hardware and software. They frequently publish reports on global IT spending and trends that are based on primary research conducted with vendors and global CIOs and CTOs. However, subscriptions to these reports are very expensive, and if you are part of a small organization you may have trouble securing access to them. One of the most important pieces of research published by these firms is the Gartner Hype Cycle, which plots leading technologies along their maturity curve. Even if you do not have access to Gartner research, you can hack your way in by searching for “Gartner Hype Cycle” on Google Images, and in most cases you will be able to see plots similar to the one shown here.

Millions of people experienced the frustration and failures of the Obamacare website when it first launched. Because the code for the back end is not open source, the exact technicalities of the initial failings are tricky to determine. Many curious programmers and web designers have had time to examine the open source coding on the front end, however, leading to reasonable conclusions about the nature of the overall difficulties.

Lack of End to End Collaboration

The website was developed with multiple contractors for the front-end and back-end functions. The site also needed to be integrated with insurance companies, IRS servers, Homeland Security servers, and the Department of Veterans Affairs, all of whom had their own legacy systems. The large number of parties involved and the complex nature of the various components naturally complicated the testing and integration of each portion of the project.

The errors displayed, and occasionally the lack thereof, indicated an absence of coordination between the parties developing the separate components. A failed sign-up attempt, for instance, often resulted in a page that displayed the header but had no content or failure message. A look at end user requests revealed that the database was unavailable. Clearly, the coding for the front end did not include error handling for failures on the back end.

Bloat and the Abundance of Minor Issues

Obviously, numerous bugs were also an issue. The system required users to create passwords that included numbers, for example, but failed to disclose that on the form and in subsequent failure messages, leaving users baffled. In another issue, one of the pages intended to ask users to please wait or call instead, but the message and the phone information were accidentally commented out in the code.

While the front-end design has been cleared of blame for the most serious failures, bloat in the code did contribute to the early difficulties users experienced. The site design was heavy with JavaScript and CSS files, and it was peppered with small coding errors that became particularly troublesome when users faced bottlenecks in traffic. Frequent typos throughout the code proved to be an additional embarrassment and were another indication of a troubled development process.

NoSQL Database

The NoSQL database is intended to allow for scalability and flexibility in the architecture of projects that use it. This made NoSQL a logical choice for the health insurance exchange website. The newness of the technology, however, means personnel with expertise can be elusive. Database-related missteps were more likely the result of a lack of experienced administrators than of the technology itself. The choice of the NoSQL database was thus another complication in the development, but did not itself cause the failures.

Another factor of consequence is that the website was built with elements of both agile and waterfall methodologies. With agile methods for the front end and the waterfall methodology for the back end, streamlining the work was naturally going to suffer further difficulties. The disparate contractors, varied methods of software development, and an unrealistically short project timeline all contributed to the coding failures of the website.

The original article was posted by Michael Veksler on Quora

It is a well-known fact that code is written once but read many times. This means that a good developer, in any language, writes understandable code. Writing understandable code is not always easy, and it takes practice. The difficult part is that you read what you have just written and it makes perfect sense to you, but a year later you curse the idiot who wrote that code, without realizing it was you.

The best way to learn how to write readable code is to collaborate with others. Other people will spot badly written code faster than the author. There are plenty of open source projects that you can start working on and learn from more experienced programmers.

Readability is a tricky thing, and involves several aspects:

- Never surprise the reader of your code, even if it will be you a year from now. For example, don’t call a function max() when it sometimes returns the minimum.

- Be consistent, and use the same conventions throughout your code. Not only the same naming conventions, and the same indentation, but also the same semantics. If, for example, most of your functions return a negative value for failure and a positive for success, then avoid writing functions that return false on failure.

- Write short functions, so that they fit your screen. I hate strict rules, since there are always exceptions, but from my experience you can almost always write functions short enough to fit your screen. Throughout my career I had only a few cases when writing a short function was either impossible or resulted in much worse code.

- Use descriptive names, unless this is one of those standard names, such as i or it in a loop. Don’t make the name too long, on one hand, but don’t make it cryptic on the other.

- Define function names by what they do, not by what they are used for or how they are implemented. If you name functions by what they do, then code will be much more readable, and much more reusable.

- Avoid global state as much as you can. Global variables, and sometimes attributes in an object, are difficult to reason about. It is difficult to understand why such global state changes, when it does, and requires a lot of debugging.

- As Donald Knuth wrote in one of his papers: “Premature optimization is the root of all evil”. Meaning, write for readability first, optimize later.

- The opposite of the previous rule: if you have an alternative which has similar readability, but lower complexity, use it. Also, if you have a polynomial alternative to your exponential algorithm (when N > 10), you should use that.
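As a rough illustration of the naming and consistency points in the list above, here is a minimal C++ sketch. The function names (largest_element, smallest_element, value_spread) are hypothetical and chosen only for this example. The convention assumed here is that every function reports failure the same way, by returning an empty std::optional.

```cpp
#include <algorithm>
#include <cassert>
#include <optional>
#include <vector>

// Named for what it does: returns the largest element, never anything else.
// An empty input is reported as std::nullopt (the convention assumed here).
std::optional<int> largest_element(const std::vector<int>& values) {
    if (values.empty()) {
        return std::nullopt;
    }
    return *std::max_element(values.begin(), values.end());
}

// Same shape and same failure convention as largest_element.
std::optional<int> smallest_element(const std::vector<int>& values) {
    if (values.empty()) {
        return std::nullopt;
    }
    return *std::min_element(values.begin(), values.end());
}

// Difference between the largest and smallest element.
// Fails in the same way as the helpers it is built from.
std::optional<int> value_spread(const std::vector<int>& values) {
    const auto largest = largest_element(values);
    const auto smallest = smallest_element(values);
    if (!largest || !smallest) {
        return std::nullopt;
    }
    assert(*largest >= *smallest);  // invariant of the two helpers
    return *largest - *smallest;
}
```

Each function fits on a screen, takes its input as a parameter instead of reading global state, and leans on the standard library instead of a hand-written loop.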

Use the standard library whenever it makes your code shorter; don’t implement everything yourself. External libraries are more problematic, and are both good and bad. With external libraries, such as Boost, you can save a lot of work. You should really learn Boost, with the added benefit that the C++ standard adopts more and more from Boost. The downside of Boost is that it changes over time, and code that works today may break tomorrow. Also, if you try to combine a third-party library that uses a specific version of Boost, it may break with your current version of Boost. This does not happen often, but it may.

Don’t blindly use the C++ standard library without understanding what it does - learn it. Look at, for example, std::vector::push_back(), std::map, and std::unordered_map.
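One way to explore what these library pieces actually do is to measure them. The sketch below is an illustration, not part of the original article: it counts how often a std::vector changes capacity while push_back grows it, lists the keys of a std::map in iteration order, and does a lookup through a std::unordered_map. The helper names (count_reallocations, keys_in_map_order, lookup_or) are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <unordered_map>
#include <vector>

// Counts how many times the vector's capacity changes while `count`
// elements are appended. The growth factor is implementation-defined,
// but the count stays far below `count` because capacity grows
// geometrically (this is what "amortized constant time" relies on).
std::size_t count_reallocations(std::size_t count) {
    std::vector<int> values;
    std::size_t reallocations = 0;
    std::size_t last_capacity = values.capacity();
    for (std::size_t i = 0; i < count; ++i) {
        values.push_back(static_cast<int>(i));
        if (values.capacity() != last_capacity) {
            ++reallocations;
            last_capacity = values.capacity();
        }
    }
    assert(values.size() == count);
    return reallocations;
}

// std::map is an ordered container: iteration always visits keys in
// sorted order, and lookups take logarithmic time.
std::vector<std::string> keys_in_map_order(const std::map<std::string, int>& entries) {
    std::vector<std::string> keys;
    keys.reserve(entries.size());
    for (const auto& entry : entries) {
        keys.push_back(entry.first);
    }
    return keys;
}

// std::unordered_map is a hash table: lookups take constant time on
// average, but iteration order is unspecified.
int lookup_or(const std::unordered_map<std::string, int>& table,
              const std::string& key, int fallback) {
    const auto found = table.find(key);
    return found == table.end() ? fallback : found->second;
}
```

Comparing count_reallocations(1000) with the 1000 appends that caused it shows how rarely push_back has to copy the whole buffer.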

Never call new and delete directly; use std::make_unique and the smart pointers unique_ptr, shared_ptr, and weak_ptr.
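Reading the line above as advice to prefer std::make_unique and the standard smart pointers over raw new and delete, a minimal sketch might look like this. The Widget class and its caller-supplied instance counter are hypothetical, introduced only to make object lifetimes observable.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

// Hypothetical resource type. It increments a caller-supplied counter on
// construction and decrements it on destruction, so lifetimes can be
// observed without any global state.
class Widget {
public:
    Widget(std::string name, int& live_count)
        : name_(std::move(name)), live_count_(live_count) {
        ++live_count_;
    }
    ~Widget() { --live_count_; }
    Widget(const Widget&) = delete;
    Widget& operator=(const Widget&) = delete;

    const std::string& name() const { return name_; }

private:
    std::string name_;
    int& live_count_;
};

// Sole ownership: the Widget is destroyed automatically when the
// returned unique_ptr goes out of scope. No delete is ever written.
std::unique_ptr<Widget> make_widget(const std::string& name, int& live_count) {
    return std::make_unique<Widget>(name, live_count);
}

// Shared ownership with a non-owning observer: the weak_ptr does not keep
// the Widget alive, so it reports expiry once the last owner is gone.
bool observer_expires_with_owner(int& live_count) {
    std::weak_ptr<Widget> observer;
    {
        auto owner = std::make_shared<Widget>("shared", live_count);
        observer = owner;
        assert(!observer.expired());
    }
    return observer.expired();
}
```

In both functions the destructor runs at a well-defined point without a single delete, which is the property that raw new and delete make easy to get wrong.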

Every time you look at a new class or function, in boost or in std, ask yourself “why is it done this way and not another?”. It will help you understand trade-offs in software development, and will help you use the right tool for your job. Don’t be afraid to peek into the source of boost and the std, and try to understand how it works. It will not be easy, at first, but you will learn a lot.

Know what complexity is, and how to calculate it. Avoid exponential and cubic complexity, unless you know your N is very low, and will always stay low.
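To make the point about complexity concrete, here is a small sketch that computes the same Fibonacci number two ways. It is an illustration chosen for this purpose, not an example from the original article, and both function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Exponential time: every call above the base case spawns two more
// calls, so the amount of work grows exponentially with n.
std::uint64_t fibonacci_exponential(unsigned n) {
    return n < 2 ? n
                 : fibonacci_exponential(n - 1) + fibonacci_exponential(n - 2);
}

// Linear time: one loop iteration per step, constant extra memory.
std::uint64_t fibonacci_linear(unsigned n) {
    assert(n <= 93);  // F(94) no longer fits in 64 bits
    std::uint64_t previous = 0;  // F(i)
    std::uint64_t current = 1;   // F(i + 1)
    for (unsigned i = 0; i < n; ++i) {
        const std::uint64_t next = previous + current;
        previous = current;
        current = next;
    }
    return previous;
}
```

Both versions are about equally readable, so the cheaper one is the better default. The exponential version is only tolerable while n is known to stay small.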

Learn data structures and algorithms, and know them. Many people think that this is simply a waste of time, since all data structures are implemented in standard libraries, but it is not as simple as that. By understanding data structures, you’d find it easier to pick the right library. Also, believe it or not, 25 years after I learned data structures, I still use this knowledge. Half a year ago I had to implement a hash table, since I needed a fast serialization capability which the available libraries did not provide. Now I am writing some sort of interval-btree, since using std::map for the same purpose turned out to be very, very slow, and the performance bottleneck of my code.

Notice that you can’t just find interval-btree on Wikipedia or Stack Overflow. The closest thing you can find is Interval tree, but it has some performance drawbacks. So how can you implement an interval-btree, unless you know what a btree is and what an interval-tree is? I strongly suggest, again, that you learn and remember data structures.
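For readers who have not combined an ordered container with intervals before, the sketch below shows a simple std::map-based baseline. It is not the author's interval-btree, whose design is not described here. It assumes non-overlapping half-open intervals [start, end) keyed by their start, and the IntervalMap class and its member names are hypothetical.

```cpp
#include <cassert>
#include <iterator>
#include <map>
#include <optional>
#include <string>
#include <utility>

// Stores non-overlapping half-open intervals [start, end), keyed by start.
class IntervalMap {
public:
    // Inserts [start, end) unless it overlaps an existing interval.
    // Returns true if the interval was inserted.
    bool insert(int start, int end, std::string label) {
        assert(start < end);
        // First stored interval that starts at or after `start`.
        const auto next = intervals_.lower_bound(start);
        if (next != intervals_.end() && next->first < end) {
            return false;  // would overlap the following interval
        }
        if (next != intervals_.begin() && std::prev(next)->second.end > start) {
            return false;  // would overlap the preceding interval
        }
        intervals_.emplace_hint(next, start, Entry{end, std::move(label)});
        return true;
    }

    // Returns the label of the interval containing `point`, if any.
    std::optional<std::string> find(int point) const {
        // First stored interval that starts strictly after `point`.
        const auto next = intervals_.upper_bound(point);
        if (next == intervals_.begin()) {
            return std::nullopt;  // every interval starts after `point`
        }
        const Entry& candidate = std::prev(next)->second;
        if (point < candidate.end) {
            return candidate.label;
        }
        return std::nullopt;
    }

private:
    struct Entry {
        int end;
        std::string label;
    };
    std::map<int, Entry> intervals_;
};
```

Each lookup is a logarithmic-time walk down a node-based tree. Knowing how a btree lays out its keys is what lets you judge whether a different structure would serve the same queries better.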

These are the most important things, which will make you a better programmer. The other things will follow.

Tech Life in North Dakota

The Hartmann Software Group understands these issues and addresses them and others during any training engagement. Although no IT educational institution can guarantee career or application development success, HSG can get you closer to your goals at a far faster rate than self paced learning and, arguably, than the competition. Here are the reasons why we are so successful at teaching:

- Learn from the experts.

- We have provided software development and other IT related training to many major corporations in North Dakota since 2002.

- Our educators have years of consulting and training experience; moreover, we require each trainer to have cross-discipline expertise (for example, to be both a Java and a .NET expert) so that you get a broad understanding of how industry-wide experts work and think.

- Discover tips and tricks about C programming

- Get your questions answered by easy-to-follow, organized C Programming experts

- Get up to speed with vital C programming tools

- Save on travel expenses by learning right from your desk or home office. Enroll in an online instructor led class. Nearly all of our classes are offered in this way.

- Prepare to hit the ground running for a new job or a new position

- See the big picture and have the instructor fill in the gaps

- We teach with sophisticated learning tools and provide excellent supporting course material

- Books and course material are provided in advance

- Get a book of your choice from the HSG Store as a gift from us when you register for a class

- Gain a lot of practical skills in a short amount of time

- We teach what we know…software

- We care…